The RiceNIC is suitable for research and education in networking applications. Both the hardware and the software of the NIC can be modified to accomplish a wide variety of tasks. The following two projects show how the RiceNIC can be used in both simple and complex applications. The first project, performing network address translation on the NIC, required only software modifications to the firmware running on the embedded processor. The second project, virtualizing the NIC for use by virtual machines, required both hardware modifications to the FPGA programming and software modifications to the firmware.

Firmware modifications only

In its most common configuration, network address translation (NAT) allows many systems on an internal network to share one address on an external network. A NAT device examines each TCP packet in flight and transparently rewrites its source or destination port and address to preserve the appearance that only one device is attached to the external network. The NAT functionality adds per-packet overhead to the NIC in several places. The headers of incoming and outgoing packets must be processed, and a mapping table must be searched to determine whether the NAT should forward or drop each packet. Finally, after the packet header is updated based on the search results, the IP and TCP checksums must be partially recalculated and the packet transferred to the internal or external network.
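The partial checksum recalculation mentioned above can be illustrated with the standard incremental update from RFC 1624. The following is a minimal Python sketch, not the RiceNIC firmware itself; the function names and the sample header values are assumptions chosen for illustration.

```python
import struct


def ones_complement_sum(data: bytes) -> int:
    """Return the folded 16-bit one's-complement sum of big-endian words."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = sum(word for (word,) in struct.iter_unpack("!H", data))
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return total


def checksum(data: bytes) -> int:
    """Full Internet checksum (checksum field must be zero in `data`)."""
    return ~ones_complement_sum(data) & 0xFFFF


def update_checksum(old_csum: int, old_field: bytes, new_field: bytes) -> int:
    """Incrementally update a checksum after a field is rewritten.

    Implements RFC 1624, eqn. 3: HC' = ~(~HC + ~m + m'), applied to each
    16-bit word of the rewritten field, so the rest of the packet is not
    re-read.
    """
    if len(old_field) != len(new_field) or len(old_field) % 2:
        raise ValueError("fields must have the same even length")
    total = ~old_csum & 0xFFFF
    old_words = struct.iter_unpack("!H", old_field)
    new_words = struct.iter_unpack("!H", new_field)
    for (old,), (new,) in zip(old_words, new_words):
        total += (~old & 0xFFFF) + new
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


# Hypothetical IPv4 header (checksum field zeroed) with an internal source.
header = bytearray(struct.pack(
    "!BBHHHBBH4s4s", 0x45, 0, 40, 0x1C46, 0x4000, 64, 6, 0,
    bytes([10, 0, 0, 5]), bytes([93, 184, 216, 34])))
old_csum = checksum(bytes(header))

# NAT rewrites the source address to the shared external address.
external = bytes([203, 0, 113, 7])
new_csum = update_checksum(old_csum, bytes(header[12:16]), external)
header[12:16] = external
```

The same update applies to the TCP checksum, which covers the addresses through the pseudo-header as well as the rewritten port, so both checksums can be adjusted from the changed fields alone.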

The RiceNIC firmware running on the first PowerPC processor was modified to perform NAT services in which all internal nodes can initiate connections to the external network, but external nodes can only initiate connections to specific ports that are forwarded to internal servers. All traffic not belonging to either connection type is dropped. A server with one RiceNIC and one conventional NIC can then operate as a fully functioning NAT firewall. The RiceNIC is the interface to the external network and the conventional NIC is the interface to the internal network. IP forwarding is enabled on the host Linux system to forward all packets between the internal and external networks.
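The forwarding policy described above can be sketched as a small mapping table. This is an illustrative Python model only; the class name, the tuple-based keys, the starting port number, and the example addresses are all assumptions, and a real implementation would also handle port exhaustion and connection timeouts.

```python
from dataclasses import dataclass, field


@dataclass
class NatTable:
    """Toy NAT mapping table (hypothetical structure, not the firmware's)."""
    external_ip: str
    port_forwards: dict            # external port -> (internal ip, port)
    next_port: int = 40000         # assumed start of the dynamic port range
    outbound: dict = field(default_factory=dict)
    inbound: dict = field(default_factory=dict)

    def translate_outbound(self, src_ip, src_port, dst_ip, dst_port):
        """Return the (external ip, external port) to use as the new source."""
        # Replies from a forwarded internal server keep the forwarded port.
        for ext_port, server in self.port_forwards.items():
            if server == (src_ip, src_port):
                return (self.external_ip, ext_port)
        # Any internal node may initiate a connection: allocate on first use.
        key = (src_ip, src_port, dst_ip, dst_port)
        if key not in self.outbound:
            ext_port = self.next_port
            self.next_port += 1
            self.outbound[key] = ext_port
            self.inbound[(ext_port, dst_ip, dst_port)] = (src_ip, src_port)
        return (self.external_ip, self.outbound[key])

    def translate_inbound(self, src_ip, src_port, dst_port):
        """Return the internal (ip, port) destination, or None to drop."""
        # Traffic belonging to a connection opened from the inside.
        known = self.inbound.get((dst_port, src_ip, src_port))
        if known is not None:
            return known
        # New external connections are allowed only on forwarded ports.
        return self.port_forwards.get(dst_port)


nat = NatTable("203.0.113.7", {80: ("10.0.0.2", 8080)})
first = nat.translate_outbound("10.0.0.5", 5555, "198.51.100.9", 443)
reply = nat.translate_inbound("198.51.100.9", 443, first[1])
stray = nat.translate_inbound("198.51.100.9", 443, 40001)  # no mapping: drop
```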

In this configuration, the RiceNIC sustained TCP stream throughput within 3% of the theoretical Ethernet limit for both incoming and outgoing NAT traffic. The NAT processing code runs on the same PowerPC processor that performs all of the NIC management tasks, and that processor still has idle cycles remaining. In addition, the second PowerPC processor is completely idle and available for other tasks. While a NAT module on a NIC is not a novel application, it shows that the RiceNIC firmware can easily be extended with new functionality and that substantial processing resources remain on the NIC for more advanced research. No hardware modifications were required for this project.

Hardware and software modifications

Virtual machine monitors (VMMs) allow multiple virtual machines running on the same physical machine to share hardware resources. To support networking, such VMMs must virtualize the machine’s network interfaces by presenting each virtual machine with a software interface that is multiplexed onto the actual physical NIC. The overhead of this software-based network virtualization severely limits network performance.

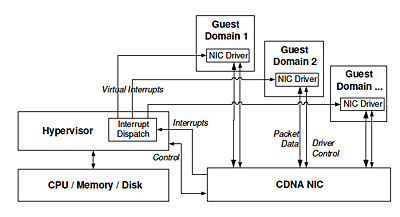

The RiceNIC was used in research with the Xen VMM that eliminated the performance limits of software multiplexing by providing each virtual machine safe direct access to the network interface. To accomplish this, network traffic multiplexing was performed directly on the RiceNIC, rather than in software on the host. This required both hardware and firmware modifications to RiceNIC. The new networking architecture is called Concurrent Direct Network Access (CDNA).

First, multiple contexts were added to the NIC hardware. A context is a set of unique memory locations, stored in SRAM, that can be written by the host using programmed I/O (PIO). These memory locations are used by the host to transfer control information to the network interface. A conventional network interface would only need a single context, but in order for the NIC to directly communicate with multiple guest operating systems in a virtual machine monitor, each guest needs its own unique context. Multiple contexts were created by modifying the FPGA memory controller to subdivide the single SRAM on the RiceNIC into multiple unique regions, and by extending the hardware event notification system to distinguish between contexts when signaling events to the embedded processor.
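The idea of subdividing one SRAM into per-guest contexts can be modeled in a few lines. This Python sketch is only an illustration of the partitioning and of context-tagged event notification; the SRAM size, the number of contexts, and all names are hypothetical and are not taken from the RiceNIC design.

```python
SRAM_BYTES = 2 * 1024 * 1024     # hypothetical SRAM size
NUM_CONTEXTS = 32                # hypothetical number of contexts
CONTEXT_BYTES = SRAM_BYTES // NUM_CONTEXTS


def decode_pio_address(offset: int):
    """Split a flat SRAM offset into (context id, offset within context)."""
    if not 0 <= offset < SRAM_BYTES:
        raise ValueError("offset outside SRAM")
    return divmod(offset, CONTEXT_BYTES)


class ContextSram:
    """Toy model of an SRAM divided into equal, non-overlapping contexts."""

    def __init__(self):
        self.mem = bytearray(SRAM_BYTES)
        self.events = []  # (context id, offset) notifications for firmware

    def pio_write(self, context_id: int, offset: int, data: bytes) -> None:
        """Write inside one context and record which context was touched."""
        if not 0 <= context_id < NUM_CONTEXTS:
            raise ValueError("no such context")
        if offset < 0 or offset + len(data) > CONTEXT_BYTES:
            raise ValueError("write would leave the context's region")
        base = context_id * CONTEXT_BYTES
        self.mem[base + offset:base + offset + len(data)] = data
        # The event carries the context id so the embedded processor can
        # tell which guest posted the control information.
        self.events.append((context_id, offset))


sram = ContextSram()
sram.pio_write(1, 8, b"\x01\x02")
```

Because each write is bounds-checked against its own region, a guest that is handed only one context cannot overwrite control information belonging to another.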

Second, the firmware running on the first PowerPC processor was modified to support virtualization. The extended firmware can independently communicate with the guest operating systems through the new hardware contexts, and the firmware now performs network traffic multiplexing and demultiplexing in software. This requires about 12 MB of additional NIC memory, which easily fits within the 256 MB DDR SODIMM supported by the RiceNIC. Even with all of the firmware modifications, it was still not necessary to use the second PowerPC processor on the RiceNIC.
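Software multiplexing and demultiplexing of this kind might look like the following Python sketch. It assumes that each context is identified by its own destination MAC address and that transmit queues are served round-robin; both choices, and every name in the code, are assumptions for illustration rather than details given here.

```python
from collections import deque


class TrafficMux:
    """Toy per-context multiplexer (hypothetical, not the CDNA firmware)."""

    def __init__(self):
        self.mac_to_context = {}
        self.rx_queues = {}
        self.tx_queues = {}

    def add_context(self, context_id: int, mac: bytes) -> None:
        self.mac_to_context[mac] = context_id
        self.rx_queues[context_id] = deque()
        self.tx_queues[context_id] = deque()

    def demux_rx(self, frame: bytes):
        """Queue a received frame for the context that owns its
        destination MAC (the first 6 bytes of an Ethernet frame)."""
        context_id = self.mac_to_context.get(frame[:6])
        if context_id is None:
            return None  # no guest owns this address: drop
        self.rx_queues[context_id].append(frame)
        return context_id

    def mux_tx(self):
        """One round-robin pass: take at most one frame per context."""
        sent = []
        for context_id in sorted(self.tx_queues):
            queue = self.tx_queues[context_id]
            if queue:
                sent.append((context_id, queue.popleft()))
        return sent


mux = TrafficMux()
mux.add_context(0, bytes.fromhex("020000000001"))
mux.add_context(1, bytes.fromhex("020000000002"))
owner = mux.demux_rx(bytes.fromhex("020000000002") + b"payload")
mux.tx_queues[0].extend([b"a1", b"a2"])
mux.tx_queues[1].append(b"b1")
first_pass = mux.mux_tx()
```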

Finally, the Xen hypervisor running on the host system was modified to communicate with the firmware to provide memory protection among the guest operating systems and ensure that they do not direct the RiceNIC to transfer data to or from physical memory that is not owned by that guest.
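The protection requirement can be illustrated with a simple ownership check on each requested transfer. This Python sketch assumes a 4 KB page size and a per-page owner table; it is a simplified model with hypothetical names, not the actual mechanism used by the modified Xen hypervisor.

```python
PAGE_SIZE = 4096  # assumed page size


class OwnershipTable:
    """Toy record of which guest owns each physical page frame."""

    def __init__(self):
        self.owner = {}  # page frame number -> guest id

    def assign(self, guest_id: int, pfn: int) -> None:
        self.owner[pfn] = guest_id

    def validate_dma(self, guest_id: int, phys_addr: int, length: int) -> bool:
        """Allow a transfer only if every page it touches belongs to the
        requesting guest."""
        if length <= 0 or phys_addr < 0:
            return False
        first = phys_addr // PAGE_SIZE
        last = (phys_addr + length - 1) // PAGE_SIZE
        return all(self.owner.get(pfn) == guest_id
                   for pfn in range(first, last + 1))


table = OwnershipTable()
table.assign(1, 10)
table.assign(1, 11)
table.assign(2, 12)

ok = table.validate_dma(1, 10 * PAGE_SIZE, 2 * PAGE_SIZE)       # pages 10-11
crosses = table.validate_dma(1, 11 * PAGE_SIZE, PAGE_SIZE + 1)  # spills into 12
```

Checking the last byte of the buffer as well as the first matters: a transfer that starts in an owned page can still run into a neighbouring page owned by another guest.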

These modifications to the firmware and FPGA hardware of the RiceNIC resulted in significant networking performance improvements for virtual machine monitors. Both the system throughput with a single guest operating system and the scaling of throughput as the number of guest operating systems increased improved by significant margins. For example, with 24 guest operating systems, CDNA’s transmit bandwidth is a factor of 2.1 higher than Xen’s and CDNA’s receive bandwidth is a factor of 3.3 higher than Xen’s. These improvements would be difficult, if not impossible, to achieve with any other Ethernet network interface, and they show the advantages of using the RiceNIC for research into future network interface architectures.

Reference:

P. Willmann, J. Shafer, D. Carr, A. Menon, S. Rixner, A. L. Cox, and W. Zwaenepoel, "Concurrent Direct Network Access for Virtual Machine Monitors," International Symposium on High Performance Computer Architecture (HPCA), Phoenix, AZ, February 2007.